News

2025.10.3 Talk at SPARKS ACM SIGGRAPH

2025.8.11 Heron's Soundscape, Vancouver

2025.1.20 Music Ally Connect SI:X Award

2023.4.14 AI Music Talk at A3E, NAMM

2022.10.24 Seminar Talk at UCSB

2022.4.1 Interview with BT for "Tails" Reverb

2021.10.14 Interview with Ted's Little Dream

2021.4.19 Interview in Synmag, first International Issue

2017.9.9 Voice of Sisyphus, Datumsoria, ZKM, Germany

2016.7.7 VR Soundscapes, SBCAST, Santa Barbara

2016.6.2 Virtual-Reality VJ Set, SBCAST, Santa Barbara

2016.5.22 Virtual-Reality VJ Set, Spectrum Infrared, Los Angeles

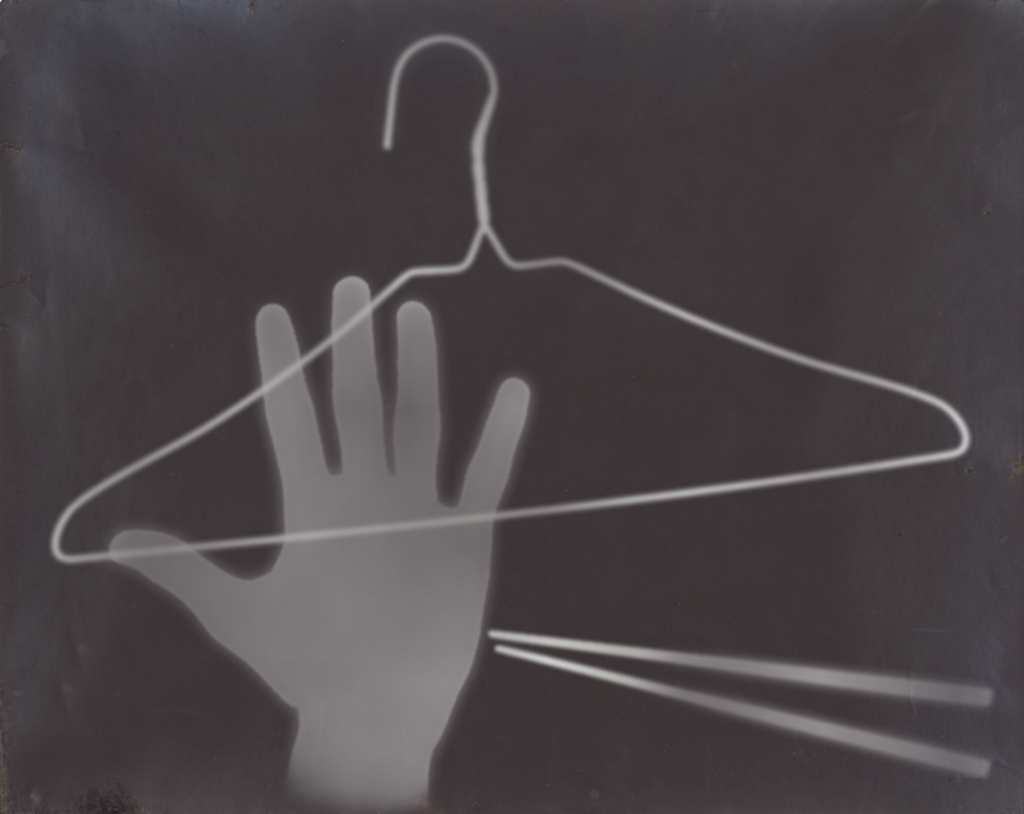

2015.7.5 Moholy-Nagy Photogram Studio App, Santa Barbara Museum of Art

2015.6.15 Voice of Sisyphus at Chronus Art Center, Shanghai, China

2014.11.9 Voice of Sisyphus at IEEE VIS 2014, Paris, France

2013.10.27 Delacroix Exhibition iPad App, Santa Barbara Museum of Art

2013.05.26 Drip at NIME, Daejeon, Korea

2013.03.15 Standing Waves presented in the AlloSphere, Santa Barbara

2012.12.07 Drip at pixxelpoint, Nova Gorica, Slovenia

2012.09.07 Voice of Sisyphus at Nature Morte Gallery, Berlin, Germany

2012.09.01 Drip at Soundwalk Festival, Long Beach

2012.06.19 Talk at ICAD, Atlanta

2012.05.29 Drip at EoYS, Santa Barbara

2012.05.29 Kinematica at EoYS, Santa Barbara

2012.04.29 Pantograph on Kinetics Radio

2012.05.15 Talk at MAT, Santa Barbara

2011.11.03 Voice of Sisyphus at Edward Cella Gallery, Los Angeles

Music
