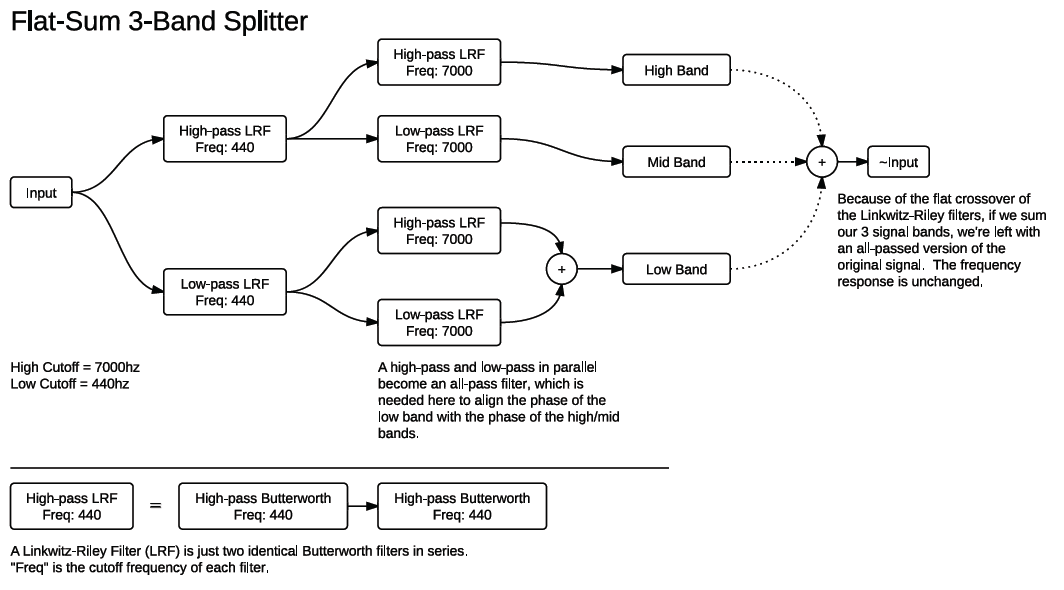

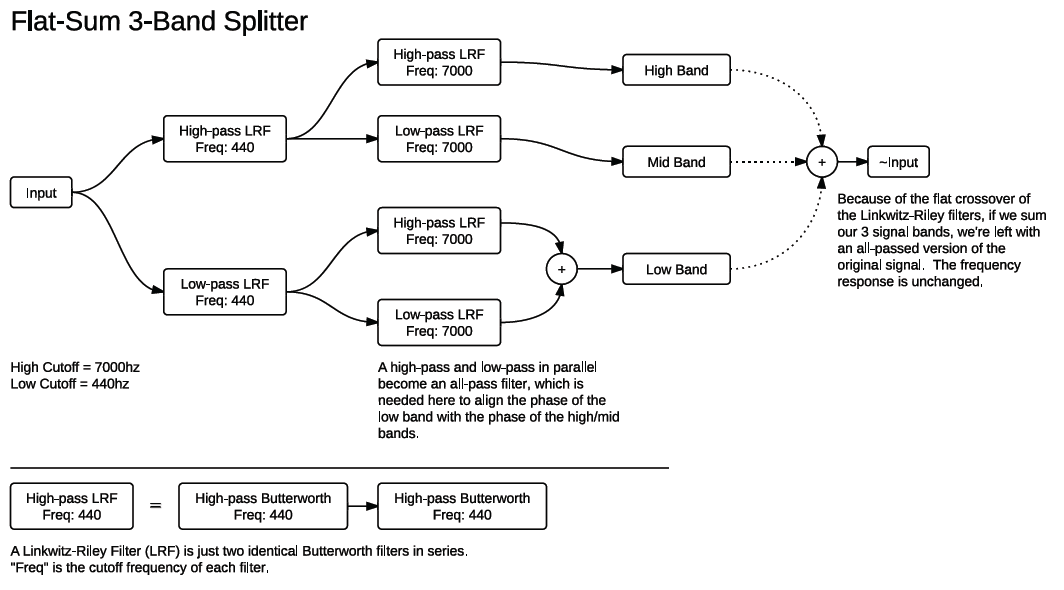

If you’re trying to split an audio signal into three frequency bands without using a costly FFT, the Linkwitz-Riley crossover is a great solution. A Linkwitz-Riley filter is just two identical Butterworth filters in series (which is why it’s sometimes called a “Butterworth squared” filter). Each Butterworth filter is 3 dB down at its cutoff, so the pair is 6 dB down there. This gives the crossover its special property: the low-pass and high-pass outputs sum to a perfectly flat magnitude response, including at the crossover point. So if you pass a signal through a low-pass and a high-pass version and add the results, you get back your original signal with its magnitude unchanged and only its phase shifted (the sum acts as an all-pass filter).
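To check the flat-sum claim numerically, here is a minimal sketch that assumes the common fourth-order variant (LR4), built from two second-order Butterworth sections. The biquad coefficients come from Robert Bristow-Johnson's Audio EQ Cookbook, which is my choice; the original doesn't specify a filter design. The function names are hypothetical. Instead of processing audio, the sketch evaluates each filter's complex frequency response, so you can see that |LP + HP| stays at 1 while each band sits at 0.5 (-6 dB) at the crossover.

```python
import cmath
import math


def butterworth_biquad(kind, fc, fs):
    """Second-order Butterworth coefficients (Q = 1/sqrt(2)), Audio EQ Cookbook form.

    Returns (b0, b1, b2, a1, a2), normalized so that a0 = 1.
    """
    w0 = 2.0 * math.pi * fc / fs
    cos_w0 = math.cos(w0)
    alpha = math.sin(w0) / math.sqrt(2.0)  # sin(w0) / (2Q) with Q = 1/sqrt(2)
    if kind == "lowpass":
        b = ((1.0 - cos_w0) / 2.0, 1.0 - cos_w0, (1.0 - cos_w0) / 2.0)
    elif kind == "highpass":
        b = ((1.0 + cos_w0) / 2.0, -(1.0 + cos_w0), (1.0 + cos_w0) / 2.0)
    else:
        raise ValueError(f"unknown filter kind: {kind}")
    a0 = 1.0 + alpha
    return (b[0] / a0, b[1] / a0, b[2] / a0, -2.0 * cos_w0 / a0, (1.0 - alpha) / a0)


def biquad_response(coeffs, f, fs):
    """Complex frequency response H(e^{jw}) of one biquad at frequency f."""
    b0, b1, b2, a1, a2 = coeffs
    z1 = cmath.exp(-2j * math.pi * f / fs)  # z^-1 evaluated on the unit circle
    z2 = z1 * z1
    return (b0 + b1 * z1 + b2 * z2) / (1.0 + a1 * z1 + a2 * z2)


def lr4_response(kind, fc, f, fs):
    """LR4 = the same Butterworth biquad twice in series, so its response is squared."""
    h = biquad_response(butterworth_biquad(kind, fc, fs), f, fs)
    return h * h


if __name__ == "__main__":
    fs, fc = 48000.0, 1000.0
    for f in (50.0, 500.0, 1000.0, 2000.0, 15000.0):
        lp = lr4_response("lowpass", fc, f, fs)
        hp = lr4_response("highpass", fc, f, fs)
        print(f"{f:7.0f} Hz  |LP|={abs(lp):.4f}  |HP|={abs(hp):.4f}  |LP+HP|={abs(lp + hp):.6f}")
```

At the crossover both LR4 outputs come out at the same value (-0.5), so they add in phase. That is why the fourth-order version can simply be summed. With first-order sections (LR2), the two outputs are 180 degrees apart at the crossover, so one band has to be polarity-inverted before summing.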

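This is a schematic of a 3-band splitter using this principle. The signal is first split into a low band and an upper band at the lower crossover frequency. The upper band is then split again at the higher crossover frequency into mid and high bands, giving us three separate bands. The only unusual thing in the diagram is the pair of filters in parallel on the low band (a low-pass and a high-pass at the higher crossover frequency, with their outputs added). These are needed to align the phase of the low band with that of the top two bands. Every time a signal passes through one of these LR filters, its phase is shifted. The mid and high bands each pass through two filters (the initial split and the second split), while the low band passes through only one. Without compensation, the low band would be out of phase with the other two, and summing the bands back together would produce dips and peaks in the frequency response. Running the low band through the second crossover’s low-pass and high-pass in parallel and adding the results applies the same phase shift without changing its magnitude, because that sum is an all-pass.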
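The splitter above can be sketched in the time domain as follows. This is a minimal sketch that assumes LR4 sections made from Audio EQ Cookbook Butterworth biquads. All class and function names, the 48 kHz sample rate, and the 500 Hz / 1000 Hz crossover points are hypothetical. The crossovers are placed deliberately close together so the phase problem is easy to see. A unit sine is fed through the splitter, the three bands are added back together, and the steady-state RMS of the sum is measured. A flat response keeps that RMS at 1/sqrt(2).

```python
import math


class Biquad:
    """One second-order IIR section in transposed direct form II."""

    def __init__(self, b0, b1, b2, a1, a2):
        self.b0, self.b1, self.b2, self.a1, self.a2 = b0, b1, b2, a1, a2
        self.s1 = 0.0
        self.s2 = 0.0

    def process(self, x):
        y = self.b0 * x + self.s1
        self.s1 = self.b1 * x - self.a1 * y + self.s2
        self.s2 = self.b2 * x - self.a2 * y
        return y


def butterworth(kind, fc, fs):
    """Second-order Butterworth biquad (Q = 1/sqrt(2)), Audio EQ Cookbook coefficients."""
    w0 = 2.0 * math.pi * fc / fs
    c = math.cos(w0)
    alpha = math.sin(w0) / math.sqrt(2.0)  # sin(w0) / (2Q) with Q = 1/sqrt(2)
    if kind == "lowpass":
        b = ((1.0 - c) / 2.0, 1.0 - c, (1.0 - c) / 2.0)
    else:  # "highpass"
        b = ((1.0 + c) / 2.0, -(1.0 + c), (1.0 + c) / 2.0)
    a0 = 1.0 + alpha
    return Biquad(b[0] / a0, b[1] / a0, b[2] / a0, -2.0 * c / a0, (1.0 - alpha) / a0)


class LR4:
    """Linkwitz-Riley (4th order): two identical Butterworth biquads in series."""

    def __init__(self, kind, fc, fs):
        self.stages = [butterworth(kind, fc, fs), butterworth(kind, fc, fs)]

    def process(self, x):
        for stage in self.stages:
            x = stage.process(x)
        return x


class ThreeBandSplitter:
    """Low/mid/high split; the low band gets an all-pass to match the upper bands' phase."""

    def __init__(self, f_low, f_high, fs, compensate=True):
        self.lp1, self.hp1 = LR4("lowpass", f_low, fs), LR4("highpass", f_low, fs)
        self.lp2, self.hp2 = LR4("lowpass", f_high, fs), LR4("highpass", f_high, fs)
        # The "two filters in parallel" on the low band: LP + HP at f_high, summed.
        self.ap_lp, self.ap_hp = LR4("lowpass", f_high, fs), LR4("highpass", f_high, fs)
        self.compensate = compensate

    def process(self, x):
        low = self.lp1.process(x)
        if self.compensate:
            low = self.ap_lp.process(low) + self.ap_hp.process(low)
        upper = self.hp1.process(x)  # everything above f_low
        mid = self.lp2.process(upper)
        high = self.hp2.process(upper)
        return low, mid, high


def summed_rms(freq, fs=48000.0, f_low=500.0, f_high=1000.0, compensate=True):
    """Feed a unit sine through the splitter, add the three bands back together,
    and return the RMS of the sum over the last 100 ms (after transients decay)."""
    splitter = ThreeBandSplitter(f_low, f_high, fs, compensate)
    n_total, n_measure = int(fs / 2), int(fs / 10)
    acc = 0.0
    for n in range(n_total):
        y = sum(splitter.process(math.sin(2.0 * math.pi * freq * n / fs)))
        if n >= n_total - n_measure:
            acc += y * y
    return math.sqrt(acc / n_measure)


if __name__ == "__main__":
    # A flat sum keeps the RMS of a unit sine at 1/sqrt(2), about 0.7071.
    for f in (100.0, 500.0, 5000.0):
        print(f"{f:6.0f} Hz  compensated={summed_rms(f):.4f}  "
              f"uncompensated={summed_rms(f, compensate=False):.4f}")
```

With compensation, the summed RMS stays at about 0.7071 at every test frequency. Without it, the 500 Hz tone comes out at roughly 0.51, a dip of almost 3 dB, because the low band skips the second crossover's phase shift. Building the all-pass from the same LR4 sections used for the second split keeps its phase response exactly matched to the upper bands.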

Many, many thanks to Robin Schmidt, a.k.a. RS-MET for help with this tutorial.
